Most of my articles for this journal focus on managing the risk of flying piston-powered general aviation aircraft, with examples of good and poor risk management. But risk management is at least equally critical in the world of operating airliners and turbine-powered transport category aircraft. Recent air carrier accidents provide illustration and lessons relevant to operating small general aviation aircraft, especially when designing and certifying them. In fact, and just as during flight operations, the job of managing risk in the design and certification is to identify, assess and mitigate that risk. These procedures apply even more objectively when using rigid design criteria, especially when they involve transport category aircraft.

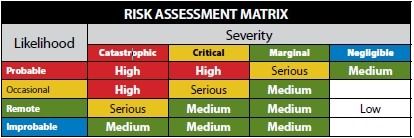

To illustrate this, let’s once again refer to the standard risk assessment matrix, below, which is used to assess risk based on the joint impact of an event’s likelihood and its severity. In the operations world, using this matrix can be somewhat subjective. When applying the “holy trinity” of risk management—identify, assess, mitigate—assessment can be the most difficult of the three functions to perform effectively. Nevertheless, training and practice can enable the average general aviation pilot to assess risk with some degree of accuracy.
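As a rough illustration of how such a matrix combines likelihood and severity, here is a minimal Python sketch. The category labels, the numeric scoring and the thresholds are all assumptions chosen for illustration; they are not taken from the matrix pictured in this article or from any FAA document.

```python
# Illustrative risk matrix. Labels, scores and cutoffs are ASSUMPTIONS,
# not values from the article's matrix or from any regulation.
LIKELIHOOD = ["improbable", "remote", "occasional", "probable"]
SEVERITY = ["negligible", "marginal", "critical", "catastrophic"]


def assess_risk(likelihood: str, severity: str) -> str:
    """Return a coarse risk level from the joint impact of likelihood and severity."""
    # Rank each axis from 1 (least) to 4 (most) and multiply the ranks.
    score = (LIKELIHOOD.index(likelihood) + 1) * (SEVERITY.index(severity) + 1)
    if score >= 12:
        return "high"
    if score >= 6:
        return "serious"
    if score >= 3:
        return "medium"
    return "low"


if __name__ == "__main__":
    for likelihood in reversed(LIKELIHOOD):
        row = [assess_risk(likelihood, severity) for severity in reversed(SEVERITY)]
        print(f"{likelihood:>10}: {row}")
```

Even in this toy version, a catastrophic hazard never scores "low" however unlikely it is, which is one reason the assessment step takes judgment rather than simple arithmetic.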

Assigning a Value

In the aircraft design and certification world, the measurement of values in this matrix is done empirically and with some precision. Especially for transport category aircraft certificated under FAR Part 25, these values can dictate design and engineering criteria that ensure a very low level of overall risk for given hazards. For example, some hazards, such as major structural failures of wings and key airframe components, are obviously catastrophic in nature. To achieve an ultra-low risk level, Part 25 standards require that the probability of such an event be reduced to, at most, one in a billion, commonly termed a “ten to the minus nine” failure. Achieving this means that the overall level of risk for a catastrophic failure would be driven off the bottom end of the first column in our risk assessment matrix to an extremely low level.

Design criteria for small airplanes certificated under FAR Part 23 are not as rigid, but they are generally just as precise. This precision achieves even greater importance under the recent major revision to Part 23, which changed this rule into a “performance-based” regulation that sets risk and performance criteria in place of the prescriptive requirements of the previous version. In other words, the FAA now tells manufacturers the performance level they have to achieve, not how to achieve it.

Supposedly, this will unleash a torrent of low-cost general aviation aircraft to the market. Don’t hold your breath. Aircraft innovation and cost are largely governed by demographics, market decisions, production volume, product liability and other factors besides aircraft certification.

In any case, aircraft design is always considered the first line of defense in an integrated system safety approach to aviation, as well as other transportation modes and other endeavors, such as nuclear power plant operation. Under a system safety approach, the following order of precedence is the accepted standard for an integrated safety management system:

• Design for minimum risk;

• Incorporate safety devices;

• Provide warning systems; and

• Develop procedures and training.

Aircraft manufacturers, airlines and operators, regulators and other interests world-wide have adopted this strategy for maintaining and managing the safety of the commercial aviation system. This approach has served us well as it has grown safer, especially over the last 25 years. At least, that is, until recently.

The Boeing 737 MAX

The aviation community, indeed the general public, has been bombarded by the media in the aftermath of two Boeing 737 MAX crashes, both of which occurred outside the U.S. And this magazine’s July 2019 issue looked briefly at some of the aerodynamic issues Boeing engineers likely dealt with. So I’ll keep my recap of those two events to the basics.

At the time of this writing, both accidents remain under investigation, but it seems apparent that the aircraft’s maneuvering characteristics augmentation system (MCAS) was a primary factor in the crash of both Lion Air Flight 610 in October 2018 and Ethiopian Airlines Flight 302 in March 2019. Additionally, both crashes occurred within months of the 737 MAX’s certification and entrance into service. It is beyond the scope of this article to fully cover the MCAS’s several major flaws. The system was added to the MAX because the airplane’s new, larger, more efficient, higher-thrust engines required Boeing to mount them higher and farther forward on the wing, producing undesirable stall characteristics in certain parts of the aircraft’s operating envelope. The MCAS was designed to reduce the angle of attack in these situations through an automatic application of nose-down horizontal stabilizer trim.

The problems with MCAS apparently began when Boeing drastically redesigned it—after the original design had been submitted to the FAA for approval—to greatly increase the amount of nose-down trim that would be applied when the system activated. Boeing then decided not to completely inform operators about MCAS and how it operated. Oh, and then the system was designed to rely only on one angle of attack (AoA) sensor instead of using both sensors and comparing results. This created a single point of failure in the process.

Is that all? Nope. Boeing also charged extra if the operator wanted a warning system that would alert pilots to a disagreement between AoA sensors (Boeing has since changed its policy and the warning system will now be standard equipment).
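The single-point-of-failure problem described above can be sketched in a few lines of Python. This is not Boeing's logic: the function names, the activation threshold and the disagreement limit are hypothetical values invented for illustration. The sketch only contrasts trusting one AoA sensor with comparing two before acting.

```python
# Hypothetical sketch only. Names and numeric limits are ASSUMPTIONS
# made up for illustration; they do not describe the actual MCAS.

def single_sensor_trigger(aoa_deg: float, threshold_deg: float = 14.0) -> bool:
    """Command nose-down trim whenever the one trusted sensor reads high."""
    return aoa_deg > threshold_deg


def cross_checked_trigger(
    left_deg: float,
    right_deg: float,
    threshold_deg: float = 14.0,
    max_disagreement_deg: float = 5.0,
) -> bool:
    """Command nose-down trim only if both sensors agree and both read high."""
    if abs(left_deg - right_deg) > max_disagreement_deg:
        # Sensors disagree: inhibit the automatic trim. A real design
        # would also raise an alert to the crew at this point.
        return False
    return min(left_deg, right_deg) > threshold_deg


if __name__ == "__main__":
    # One failed vane reads 40 degrees while the healthy one reads 4.
    print(single_sensor_trigger(40.0))       # acts on the bad reading
    print(cross_checked_trigger(40.0, 4.0))  # inhibited by the disagreement
```

With a single input, one bad vane is enough to command trim. With a comparison, the same failure produces a disagreement that can be annunciated instead of acted upon.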

All Boeing 737 models are on a common type rating. When an airline pilot transitions from one 737 variant to another, the airline must provide “differences” training. Both the manufacturer and the airline are keen to minimize this training, and the FAA accommodates them by providing five ascending levels (A through E) of differences training, ranging from merely publishing a manual revision (Level A) to full-blown Level D simulator or aircraft training (Level E). Airlines, of course, hate providing extra (read “expensive”) training and, in any case, were not sufficiently aware of MCAS to know that extra training would be needed. Pilots were similarly in the dark and were never provided training to deal with a malfunctioning MCAS. Guess which level of training was involved with MCAS?

So, let’s catalog the risk management and system safety sins committed with these tragic events, using the four design and procedure categories I earlier enumerated. First, it appears that Boeing may not have done a complete hazard analysis with respect to MCAS. It is likely it did not anticipate a catastrophic result and/or underrated the likelihood of it occurring. Thus, it bungled the first line of defense: design for minimum risk. Next, it incorporated a safety device, MCAS, that failed to protect against, and even increased the chance of, a loss-of-control event. Third, it failed to provide, as standard equipment, a warning device that would alert pilots to a malfunctioning AoA sensor. Finally, it did not require additional training on the system or even provide any substantial information on MCAS or its potential failure modes.

You might wonder where the FAA was while all this was happening. Under great political pressure, the agency delayed grounding the 737 MAX until after other regulatory authorities took this step and left it with no choice. The FAA has since stated that the 737 MAX will not take to the skies again until the agency has thoroughly reviewed Boeing’s ultimate fix. At this writing, that date hasn’t been set and recent pronouncements by affected carriers indicate the grounding may extend into 2020.

Of course, much of the original FAA certification work done on the MAX was performed by Boeing’s own employees, legally delegated to do so by FAR Part 183 as “representatives of the Administrator.” There are many people who are critical of this system, as described in the sidebar above.

Pilot Risk Factors Are Important Too

Since our initial discussion involved transport-category aircraft and airlines, let’s start with a discussion of pilot risk factors in airline flying. Huh? How can that be? Aren’t airline pilots the cream of the crop? Well, actually, only “maybe.” Airline pilots have the unfortunate status of being humans, like the rest of us mere mortal pilots. They are subject to the same pilot-related risk factors, both qualification-related and aeromedical, that affect the rest of us. We have recently seen the unfortunate results of pilot failures.

The most tragic example I can cite is the March 2015 crash of Germanwings Flight 9525 on its way from Spain to Germany. The aircraft was deliberately flown into terrain by the co-pilot after the captain left the flight deck for the rest room. This suicide was brought on by the severe mental illness of the co-pilot, which somehow slipped through the cracks of whatever risk management process existed to screen for such a condition. This tragic outcome was directly related to aeromedical certification, while other noteworthy airline accidents, such as Colgan Air Flight 3407 and Air France Flight 447, have their roots in pilot recruitment and certification.

As airplanes become more sophisticated, the flight crew will look even more like the weak link in the risk management process. You might ask, well what about Sully and the Miracle on the Hudson? Didn’t humans save the day there? Actually, the main miracle wasn’t the ditching in the river, which has been done successfully before with transport aircraft including jets, but rather it was the fact that the weather was severe clear. If it had been low IFR, Sully might not have found the river with the relatively primitive moving map on that Airbus A320. In the future, however, and the technology is maturing now, a later Airbus or Boeing model with artificial intelligence will know exactly what must be done and know where to find the Hudson.

Lessons for All of Us

The brave new world of autonomous aircraft described in the sidebar above may one day be routine on airliners. However, it’s likely to come first to a general aviation aircraft you can actually buy. Its contours already exist in military-grade and consumer drones. It could be a long time, however, before this technology really replaces the current general aviation fleet and minimizes or eliminates the need for some of the training it takes today to become a pilot.

In the interim, you’ll need to continue to be an effective risk manager. You will still need to identify, assess and mitigate risks. Remember that, while the airlines inhabit the “ten to the minus nine” world, you’re stuck in a world that is “ten to the minus five,” at best, or one in 100,000.
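To put the gap between those two worlds in perspective, here is a small Python calculation. It rests on assumptions the article does not state: that both figures are per flight hour, that each hour is independent of the others, and that the exposure is a hypothetical 5,000 hours.

```python
import math


def chance_of_at_least_one(p_per_hour: float, hours: float) -> float:
    """Probability of one or more events over `hours`, assuming independent hours."""
    # 1 - (1 - p)^hours, computed with log1p/expm1 to stay accurate for tiny p.
    return -math.expm1(hours * math.log1p(-p_per_hour))


CAREER_HOURS = 5_000  # hypothetical exposure, for illustration only

airline = chance_of_at_least_one(1e-9, CAREER_HOURS)
general_aviation = chance_of_at_least_one(1e-5, CAREER_HOURS)

print(f"ten to the minus nine: {airline:.2e}")
print(f"ten to the minus five: {general_aviation:.2e}")
print(f"ratio: {general_aviation / airline:,.0f}")
```

Under these assumptions, the cumulative chance comes out near five in a million for the airline figure and near 5 percent for the general aviation figure, a gap of roughly 10,000 to one.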

Aircraft engineering, design and manufacturing is geared to ensuring operational safety by adherence to certification standards. When that system fails to achieve the desired result, in part because of deception and overzealous attention to the financial bottom line, trusts are betrayed and everyone suffers, directly or indirectly.

The Fox Guarding the Hen House?

On and off over the years, the FAA’s delegation of certification responsibility has been controversial. Today’s delegation system is well-entrenched, and ranges from designated engineering representatives (DERs) to designated pilot examiners (DPEs), as pictured at right.

Under the FAA’s organization designation authorization (ODA), companies must establish a certification unit within the company—supposedly independent, even though the employee salaries are paid by the company—which the FAA is supposed to oversee and evaluate.

The FAA has testified before Congress that its delegation activities save several billion budget dollars. Indeed, the delegation process extends throughout the aviation world: Almost all general aviation pilots take their knowledge and practical tests from designees such as DPEs. Nevertheless, and in the aftermath of the 737 MAX troubles, the agency’s delegation activities will be under outside scrutiny for some time.

There’s a big debate on automation in the cockpit, and it’s all the rage. In my view, the human is clearly the weak link in the safety equation and the technology to prevent another Germanwings 9525—or another Air France 447 or Colgan Air 3407—is already here. Envision, if you will, a near-term world in which three entities will replace the two humans in the airline cockpit. There will be a human pilot, avionics incorporating an advanced artificial intelligence and a ground-based control center—any one of which will be able to return the airliner safely to earth no matter what the situation, even if one of the other two modes screws up. Sure, there could be a failure down the road, but I wager there will be a lot fewer than there are today with two humans at the helm. Will this system get to “ten to the minus nine” reliability? I’m betting someday it will.

Robert Wright is a former FAA executive and President of Wright Aviation Solutions LLC. He is also a 9900-hour ATP with four jet type ratings, and he holds a Flight Instructor Certificate. His opinions in this article do not necessarily represent those of clients or other organizations that he represents.

I am happy to read a well written and concise explanation of the MAX issue. It is curious however to conclude with a HAL9000 screenshot, because I count at least 5 times HAL has destroyed aircraft. I follow the reasoning just so far. A transport cockpit needs 3 humans in it, as it always has. Anything less reduces the fault tolerance to zero because no large aircraft is certificated for single pilot operation. If the best IT people can not stop hacking intrusions at the largest corporations you must be, really must be joking, to allow a system of ground control. So far there have been over a dozen times Tesla self driving computers have caused accidents on becoming confused by emergency vehicle lights. And you want these computers to fly an airplane? I will not be sitting next to you.