Thanks in part to the new Airman Certification Standards (ACS), which require applicants to demonstrate risk management proficiency on practical tests, risk management is becoming an integral part of the training process. Every task in every ACS for certificates and ratings evaluates applicants on their ability to identify, assess and mitigate risk. As familiarity with the ACS grows, examiners may require applicants to show their risk analysis during the oral portion of the practical test. This is all well and good, but applicants should also be able to demonstrate risk management proficiency after takeoff, when changing conditions alter the risk picture.

Even today, most pilots have not received any training in risk management. The ACS system is still relatively new; the first ACS documents were issued in 2016. While pilots are learning formal risk management, most instructors have not yet learned how to teach it, and most examiners have not yet learned how to test it. To help instructors help their students, the FAA has begun revising its Risk Management Handbook (FAA-H-8083-2), originally issued in 2009.

The FAA’s 3P model for aeronautical decision making is what the agency calls “a simple, practical, and systematic approach that can be used during all phases of flight.” Because it emphasizes using situational awareness to systematically perceive hazards, it is better suited to use in flight than on the ground.

In the first step, Perceive, the goal is to develop situational awareness by perceiving hazards that could contribute to an undesired future event. Next, we Process new information to determine whether it constitutes risk, that is, a hazard that has not been controlled or eliminated. Once we have perceived and processed the hazard, it’s time to Perform whatever actions are needed to minimize or eliminate the risk. This dynamic cycle makes the 3P model similar in concept to the OODA (observe, orient, decide, act) loop.

A ROUTINE MISSION

One concept the new handbook will emphasize is that the risk management process starts before takeoff but must continue in flight. You may conduct a perfect risk analysis before flight, with all risks identified, assessed and mitigated, and still encounter unexpected hazards after takeoff that introduce new risks.

This was the case for a Mooney pilot who departed Pontiac, Mich., for Providence, R.I., one night in November 1993. He appeared to comply with the accepted practices and equipment standards of the time, and both he and the airplane were “legal” under the regulations governing the flight. Yet in the end, his failure to apply risk management principles in flight led to a tragic outcome.

The instrument-rated private pilot had nearly 1700 total flight hours, including 58 hours in actual instrument conditions and 175 hours at night. He had acquired his 1978 Mooney M20J 201 several months earlier and had flown it about 120 hours in the previous 147 days and 25 hours in the previous 30 days. The airplane had about 3000 airframe hours and was typically equipped for a Mooney of that vintage. Other than an added VFR-only Loran-C receiver, it was equipped as it had left the factory: two navcomms (one with a glideslope receiver), an ADF, a DME, a marker beacon receiver, a transponder and a basic but capable single-axis autopilot.

The pilot filed an IFR flight plan from Oakland Airport in Pontiac (PTK) to T.F. Green Airport in Providence (PVD), a straight-line distance of 536 nm. The pilot and his passenger were on a business trip. The departure time was 2058 local, and because it was November, the entire flight would be conducted at night.

The pilot received a full weather briefing at 2002. Weather along the route was generally VFR to marginal VFR, but at Providence the ceiling was 1400 feet overcast, so at some point the Mooney would have to descend through the overcast to land. The Mooney departed Pontiac as planned and proceeded normally for about an hour and 45 minutes.

So far, the flight appeared routine, and most pilots would probably consider it low risk. Yes, it was night with some marginal weather, but the pilot was qualified to conduct the flight under IFR, and the aircraft was equipped for it.

There is no evidence that the Mooney pilot conducted a formal risk analysis before takeoff. However, we can construct one from the available data using the PAVE model.

Pilot

The pilot was instrument-rated, legally current and, judging by his recent flying, presumably proficient. No data point to any aeromedical risks, with one possible exception: the departure at nearly 9 p.m. could well have increased the risk of pilot fatigue. There is no evidence of other pilot-related factors.

Aircraft

The aircraft was equipped for IFR flight, and there was no evidence that any equipment was inoperative when it departed. Its endurance with full fuel was more than enough to reach the destination and any suitable alternate airports.

Environment

The environment may have elevated the risk on this flight: the weather was marginal, it was night, and part of the flight may have been conducted over water. However, the pilot mitigated these risks by filing IFR, and the destination airport had complete facilities, including an ILS.

External pressures

The pilot and his passenger may have been under some pressure to complete this flight. The available data don’t make clear the exact nature of the business trip or how close the flight was to the business event. External pressures can be insidious, however, and the trip could have created pressure to complete the flight late at night. External pressures can also magnify risks in other categories.

Overall, we might conclude that, while risks may have been elevated on this flight, the pilot took steps to mitigate them. Indeed, I have made flights like this under similar conditions, as I imagine many readers have. The key, however, is not the risk level at departure but the changes in risk that occur as the flight progresses.

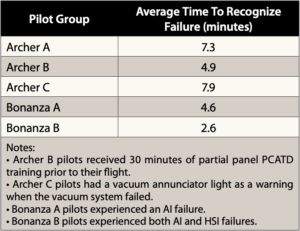

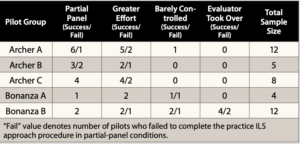

As discussed in this article’s main text, a 2002 study conducted by the AOPA Air Safety Foundation (now the Air Safety Institute) and the FAA sought to “collect baseline aircraft data evaluating pilots’ skills in dealing with an unannounced vacuum failure in flight for comparison with results obtained in flight simulators.” Forty-one pilots flew two airplanes, a Piper PA-28-181 Archer II and a Beech A36 Bonanza, each equipped with a standby vacuum system and with polarized materials restricting the pilot’s outside view. The basic methodology involved disabling the factory vacuum system and reverting to the standby for takeoff and a practice ILS approach to 800 feet agl. At a predetermined point during the missed approach, the evaluator in the right seat failed the standby vacuum system, and the pilot’s recognition time and control inputs were recorded as he or she maneuvered for another ILS. Some succeeded, some didn’t. The tables at right present some of the results.

The study makes three recommendations:

• Training should allow vacuum failure detection and diagnosis in realistic settings.

• Vacuum failures should be highly conspicuous, and instruments should have failure indicators.

• Independent power sources, instrument integration and backup instruments should be considered.

A NEW RISK EMERGES

After the flight had proceeded normally for nearly two hours, the risk levels for the Mooney and its pilot changed markedly as a result of a single event. With the Mooney still nearly 200 miles from Providence, air traffic controllers observed that it was unable to maintain heading. When asked about this, the pilot replied that he had “lost his vacuum system.” As the flight continued, controllers told the pilot that if he continued to his destination, he could not avoid instrument meteorological conditions.

At this point, we should stop the action and analyze the full implications of the vacuum pump failure. The following points are critical in understanding how the risk level changed for the Mooney pilot, the implications of this change, issues surrounding partial-panel operation and how proper risk management could have changed the ultimate outcome.

A single-point failure occurred. When the vacuum pump failed, the Mooney pilot lost the attitude indicator, heading indicator and the autopilot. There was no standby vacuum system installed. He was now reduced to a partial panel that relied heavily on the turn coordinator.

The risk level changed instantly. The accident record is clear: in IMC, a loss of control on partial panel will, at a minimum, probably occur at some point, which rates as “occasional” likelihood on the risk management matrix. The severity of such an event, potentially the loss of life and the aircraft, rates as “catastrophic” on the matrix. This high (red) level of risk demands immediate mitigation, yet the pilot took no such action.
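The likelihood-and-severity reasoning in the paragraph above can be sketched as a simple lookup. This is a hypothetical illustration, not the FAA’s matrix: only the “occasional” and “catastrophic” labels and the resulting high (red) rating come from the text, while the other labels, the numeric scores and the thresholds are assumptions chosen so that combination lands in the high band.

```python
from enum import IntEnum


# Assumed ordinal scores: higher means more likely.
class Likelihood(IntEnum):
    IMPROBABLE = 1
    REMOTE = 2
    OCCASIONAL = 3
    PROBABLE = 4


# Assumed ordinal scores: higher means more severe.
class Severity(IntEnum):
    NEGLIGIBLE = 1
    MARGINAL = 2
    CRITICAL = 3
    CATASTROPHIC = 4


def risk_level(likelihood: Likelihood, severity: Severity) -> str:
    """Combine likelihood and severity into a risk level.

    Multiplying the two scores and the cutoffs below are illustrative
    assumptions, not the handbook's published matrix cells.
    """
    score = int(likelihood) * int(severity)
    if score >= 12:
        return "high"  # red in the text: demands immediate mitigation
    if score >= 6:
        return "serious"
    if score >= 3:
        return "medium"
    return "low"


# Partial-panel loss of control in IMC, as rated in the text.
print(risk_level(Likelihood.OCCASIONAL, Severity.CATASTROPHIC))  # high
```

Multiplying ordinal scores is only a shortcut; a published matrix is a table, so a dictionary keyed on (likelihood, severity) pairs would reproduce its cells exactly once they are confirmed against the handbook.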

For each hazard you perceive, work through this four-step CARE process:

Consequences (e.g., departing after a full workday creates fatigue and pressure)

Alternatives (e.g., delay until morning; reschedule meeting; drive)

Reality (e.g., dangers and distractions of fatigue could lead to an accident)

External pressures (e.g., business meeting at destination might influence me)

A good rule of thumb for the processing phase? If you find yourself saying that it will “probably” be okay, it is definitely time for a solid reality check.
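The four CARE steps and the “probably” rule of thumb above can be captured as a simple worksheet. This is a hypothetical sketch: `CareWorksheet` and `needs_reality_check` are illustrative names, not part of any FAA tool, and scanning for the word “probably” is only a crude stand-in for the honest self-assessment the rule of thumb calls for.

```python
from __future__ import annotations

import re
from dataclasses import dataclass


@dataclass
class CareWorksheet:
    """Hypothetical record of one hazard processed with the CARE steps."""
    hazard: str
    consequences: str
    alternatives: list[str]
    reality: str
    external_pressures: str


def needs_reality_check(sheet: CareWorksheet) -> bool:
    """Flag a worksheet whose reasoning leans on the word "probably"."""
    text = " ".join(
        [sheet.consequences, sheet.reality, sheet.external_pressures]
        + sheet.alternatives
    )
    return re.search(r"\bprobably\b", text, re.IGNORECASE) is not None


# The sidebar's fatigue example, entered step by step.
fatigue = CareWorksheet(
    hazard="Departing after a full workday",
    consequences="Fatigue and pressure",
    alternatives=["Delay until morning", "Reschedule meeting", "Drive"],
    reality="Dangers and distractions of fatigue could lead to an accident",
    external_pressures="Business meeting at destination might influence me",
)

# A hypothetical in-flight entry whose "reality" step is wishful thinking.
hopeful = CareWorksheet(
    hazard="Vacuum failure while still in VMC",
    consequences="Partial-panel flight if I continue into IMC",
    alternatives=["Deviate to an airport with visual conditions"],
    reality="It will probably be okay",
    external_pressures="Business trip",
)

print(needs_reality_check(fatigue))  # False
print(needs_reality_check(hopeful))  # True
```

Keeping each step as its own field makes it obvious when one was skipped; the keyword check only flags the specific warning sign the rule of thumb describes.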

Partial-panel flying in IMC is risky. In conjunction with the FAA’s Office of Aerospace Medicine and its Civil Aerospace Medical Institute (CAMI), the AOPA Air Safety Foundation studied pilots’ reactions to a partial-panel failure. The study found that pilots could usually maintain control of a fixed-gear single such as the study’s Piper Archer, but a significant number lost control of a high-performance or complex aircraft such as the study’s Beech Bonanza or the Mooney in this article. The sidebar at the top of the opposite page includes additional details about this study.

Training and testing may be flawed. Although the FAA/AOPA study maintained that proper training could make pilots better able to cope with partial-panel emergencies, I believe this is a double-edged sword. If such training encourages pilots to attempt partial-panel operations in avoidable IMC, as the Mooney pilot did, then the training could encourage risky behavior. Both training and testing must emphasize that partial-panel IMC is a full-blown emergency requiring immediate mitigation, such as deviating to visual conditions. Neither the FAA’s Instrument Flying Handbook (FAA-H-8083-15B) nor the current instrument rating ACS does this.

In-flight risk management should have been applied. The Mooney pilot in this article could have applied the CARE model (see the sidebar at the bottom of the opposite page) when the vacuum pump failed. That is, he could have perceived the consequences, considered his alternatives, recognized the reality that he wouldn’t get to his destination and deviated to an airport with visual conditions while he was still in VMC.

The Mooney pilot didn’t display much concern about his system failure (the NTSB found that the vacuum pump’s input shaft had fractured), did not declare an emergency (although he notified ATC) and willingly flew into IMC without working gyro instruments after overflying better weather. He was also maneuvering for a second ILS approach after missing the first one, both flown with no-gyro vectors that ATC had offered. Make no mistake: such a failure is, and should be treated as, an emergency.

Short of installing backup systems or instruments, what’s a conscientious pilot to do to minimize the risk posed by a vacuum failure? One place to start is the article beginning on the next page. But it’s also critical that pilot training emphasize partial-panel operations in aircraft susceptible to such failures.

A TRAGIC ENDING

Unfortunately, the Mooney pilot told ATC that IMC was not a problem and he elected to continue toward Providence. While being vectored to the PVD ILS for a second approach attempt, the pilot lost control, the aircraft crashed, and both he and his passenger died.

As is typical, the NTSB report on this accident listed the probable cause as inadequate planning and loss of control. In fact, the true root cause of this accident was poor in-flight risk management. This accident holds important lessons for all of us, including:

• There is no substitute for a comprehensive risk analysis before any complex flight, especially in IMC.

• It is important to identify, assess and mitigate risks, and this may also involve analyzing the “what-if” conditions and situations that might apply. For example, what if the vacuum pump fails while I’m in IMC? What other equipment, like the autopilot, might also be rendered inoperable?

• Your risk management processes must continue while you’re airborne, and you must fly the airplane until you have parked it at your destination.

Finally, you might ask why I chose to analyze an accident that occurred 27 years ago. It’s true that I could have picked a more recent one; unfortunately, there are many to choose from. However, this one resonated because I was the previous owner of that Mooney. I never met the deceased pilot. I sold the airplane to a broker, and he sold it to the accident pilot.